Research

Our research revolves around human-centered technologies and AI systems that sense and infer a user's cognitive state, level of task-related expertise, actions, and intentions from multimodal data. These systems provide information for media and assistive technologies in many everyday activities, especially in the context of learning.

AI Literacy for Children

This project aims to promote foundational AI literacy among young learners in primary education. By introducing core concepts such as algorithms, machine learning, decision trees, and ethical considerations, the workshop encourages responsible and critical engagement with AI from an early age. Through hands-on, playful activities, children are empowered to understand how AI systems work and how biases can arise.

ArtisanXR: Immersive Learning Experience with Conversational AI

ArtisanXR aims to safeguard and promote intangible cultural heritage (ICH), especially vanishing arts and craftsmanship, by harnessing cutting-edge technologies to preserve, document, and disseminate the knowledge, skills, and expressions that define the rich cultural identities of communities worldwide. The project fosters cross-cultural understanding and uses conversational AI agents to convey cultural and historical background and to provide learning guidance. By creating a comprehensive digital archive, we hope to facilitate knowledge preservation and inspire future generations through accessible and engaging learning experiences.

Assisting the remote video learner with self-regulation support

Online video learning is becoming increasingly important in educational contexts. However, remote video learning challenges not only students' self-regulation but also teachers' ability to detect self-regulation problems. The project addresses this problem at the interface of psychology, educational science, and computer science. To this end, potential self-regulation problems will be detected automatically, and measures to support learners, for example by optimizing instructional videos, will be developed.

DigiProMIN

DigiProMIN (digitalisierungsbezogene und digital gestützte Professionalisierung von MIN-Lehrkräften; in English, roughly "digitalization-related and digitally supported professionalization of STEM teachers") is a collaborative project involving eight national universities. It aims to develop a digitalization-related framework to promote the professional development of STEM teachers.

EduPEF: Educational Personalized Feedback for Mathematics

This tool uses Large Language Models (LLMs) to generate personalized feedback for mathematical multiple-choice questions. By comparing stock, automatic, and adaptive feedback, it evaluates the potential of LLMs to provide feedback tailored to individual student profiles. The findings are intended to guide the development of more effective, adaptive learning tools that offer targeted support for different student profiles and improve overall educational outcomes.

OLLE: Your AI-Powered Oral Examiner

OLLE is an AI-powered exam preparation tool designed to help students study effectively by highlighting what they need to know and where they need extra practice, thereby building their confidence for oral exams. By generating personalized questions from uploaded study materials, OLLE creates an interactive, exam-like environment where students rehearse articulating structured and concise answers.

PEER: An AI-based educational platform aimed at enhancing essay writing skills

PEER (Paper Evaluation and Empowerment Resource) is a tool designed to assist students in writing essays, from primary school to university level and across various genres. The tool leverages AI to provide personalized feedback and improvement suggestions based on the analysis of essays submitted either by photograph or direct text input, while preserving anonymity of the data collected for continuous improvement.

Privacy Preserving Eye-tracking Applications

We develop privacy-preserving applications of eye tracking using techniques such as differential privacy, federated learning, domain adaptation, and randomized encryption, among others. A particular emphasis of our work is the application in virtual environments. Part of this work will be integrated into our VR classroom project.
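To illustrate one of the techniques named above, the following is a minimal sketch of differential privacy applied to an aggregated gaze feature. It is not the project's implementation. The function name `private_mean`, the fixation-duration example, and the clipping bounds are hypothetical. The sketch assumes the standard Laplace mechanism and a neighboring-dataset definition in which one value is replaced, so that the sensitivity of a clipped mean over n values is (upper - lower) / n.

```python
import random


def private_mean(values, lower, upper, epsilon, rng=None):
    """Return an epsilon-differentially-private mean of bounded values.

    Hypothetical helper for illustration: values could be, for example,
    fixation durations in milliseconds collected from one session.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if not values:
        raise ValueError("values must not be empty")
    rng = rng or random.Random()

    # Clip every value into [lower, upper] so the sensitivity is bounded.
    clipped = [min(max(v, lower), upper) for v in values]
    true_mean = sum(clipped) / len(clipped)

    # Replacing one value changes the mean by at most this amount.
    sensitivity = (upper - lower) / len(clipped)
    scale = sensitivity / epsilon

    # The difference of two i.i.d. exponential samples with mean `scale`
    # is distributed as Laplace(0, scale).
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_mean + noise


# Example: a noisy mean fixation duration with assumed bounds of 100-600 ms.
durations_ms = [210.0, 250.0, 340.0, 180.0, 295.0]
noisy = private_mean(durations_ms, lower=100.0, upper=600.0, epsilon=1.0)
```

A smaller `epsilon` gives stronger privacy at the cost of a noisier result; the clipping bounds must be chosen without looking at the private data.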

SAM - your AI mentor for academic success

SAM is more than just a tool; it's your personal AI mentor, ready to guide you through complex subjects and clarify doubts in real-time.

Our cutting-edge web application is designed to transform the way you study, making every learning session more efficient, interactive, and tailored to your needs. SAM leverages advanced AI technology to offer a personalized learning experience. By allowing you to interact directly with your study material, SAM ensures that no question goes unanswered, and no topic is left unclear.

SARA: Smart AI Reading Assistant

SARA was developed to help students improve their reading and comprehension skills. The tool combines artificial intelligence with eye-movement tracking to identify passages in a text where comprehension may be difficult, and it offers individually tailored support.

SolmiBird: Intelligent Vocal Trainer for Confident Singing

SolmiBird explores how AI can enhance music education by providing real-time, interactive vocal feedback. Recognizing that many younger students struggle with singing confidence due to limited access to personalized guidance, we developed SolmiBird as a gamified singing tutor that supports vocal teachers and motivates students to practise. It creates an engaging and enjoyable training experience by offering immediate feedback on pitch accuracy and vocal stability to help students build confidence and improve their vocal skills through regular, self-guided practice.
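As a rough illustration of what feedback on pitch accuracy can involve, the sketch below measures how far a sung frequency lies from a target note in cents (100 cents is one equal-tempered semitone). It is not SolmiBird's actual method. The function names and the 25-cent tolerance are assumptions made for this example, and the sung frequency is assumed to come from a separate pitch detector.

```python
import math


def cents_off(sung_hz, target_hz):
    """Signed deviation of the sung pitch from the target, in cents."""
    if sung_hz <= 0 or target_hz <= 0:
        raise ValueError("frequencies must be positive")
    return 1200.0 * math.log2(sung_hz / target_hz)


def pitch_feedback(sung_hz, target_hz, tolerance_cents=25.0):
    """Classify a sung pitch as 'in tune', 'sharp', or 'flat'.

    tolerance_cents is a hypothetical threshold chosen for illustration.
    """
    deviation = cents_off(sung_hz, target_hz)
    if abs(deviation) <= tolerance_cents:
        return "in tune"
    return "sharp" if deviation > 0 else "flat"


# Example: a student aims for A4 (440 Hz) but sings slightly low.
label = pitch_feedback(430.0, 440.0)
```

Working in cents rather than hertz keeps the tolerance perceptually comparable across low and high notes.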

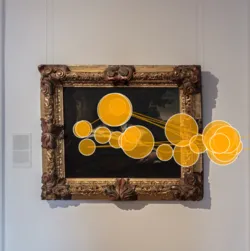

The Museum Gaze

Recent studies have used mobile eye-tracking technology to capture eye movements in natural, uncontrolled settings such as museums. Our collaboration with the Cognitive Research in Art History (CReA) Lab at the University of Vienna aims to comprehensively investigate the museum gaze, a complex phenomenon that has long been of interest in art history and psychology. We are conducting four research studies, each focusing on a different aspect of the museum gaze, in partnership with the curatorial team at the Belvedere Museum in Vienna.

VIVA - Vision Optics With Integrated VCSELS and Autofocal Lenses

At VIVA, we're exploring how eye tracking can be smarter, lighter, more private, and more human. Our mission is to create a new kind of eye-tracking system that fits seamlessly into everyday life and is designed with people at the center. The project is a collaboration among 13 partners from 7 countries.

What makes VIVA different? We're building a camera-free eye-tracking solution that uses laser feedback interferometry (LFI) and meta-optics, technologies that allow fast, accurate tracking (around 1 kHz) without compromising comfort or privacy. Our goal is to keep the whole system under 40 grams so that it can be worn all day, like a regular pair of glasses.

VR Classroom

The VR classroom project aims to create an immersive virtual learning environment that supports data tracking in multiple modalities, such as eye tracking and hand tracking, using the corresponding devices and sensors, in particular head-mounted displays (HMDs).